Rows: 34,576

Columns: 3

$ id <chr> "AquaDelight Inc and Son's", "BaringoAmerica Marine Ges.m.b…

$ shpcountry <chr> "Polarinda", NA, "Oceanus", NA, "Oceanus", "Kondanovia", NA…

$ rcvcountry <chr> "Oceanus", NA, "Oceanus", NA, "Oceanus", "Utoporiana", NA, …

Rows: 5,464,378

Columns: 8

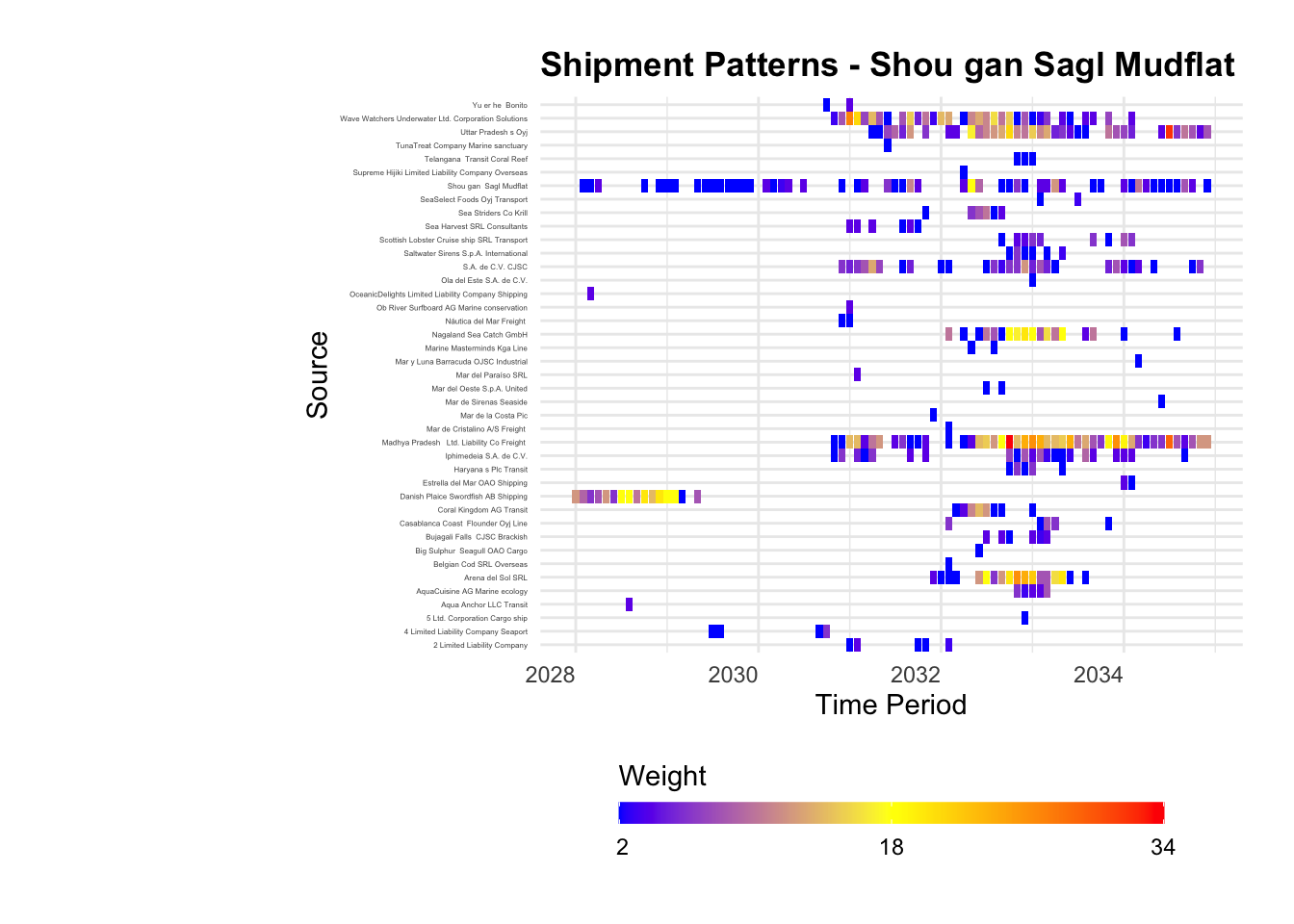

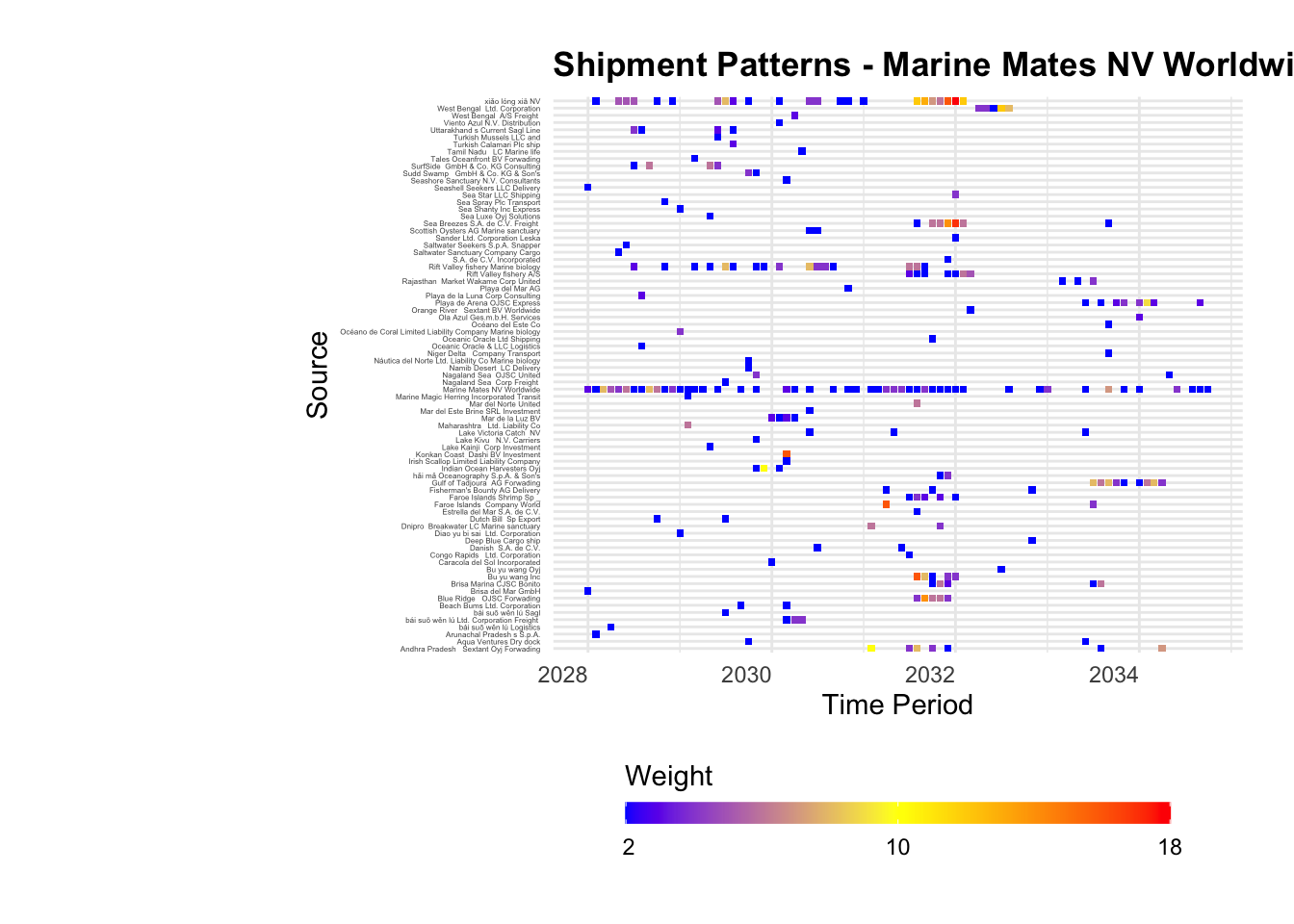

$ source <chr> "AquaDelight Inc and Son's", "AquaDelight Inc and Son…

$ target <chr> "BaringoAmerica Marine Ges.m.b.H.", "BaringoAmerica M…

$ arrivaldate <chr> "2034-02-12", "2034-03-13", "2028-02-07", "2028-02-23…

$ hscode <chr> "630630", "630630", "470710", "470710", "470710", "47…

$ weightkg <int> 4780, 6125, 10855, 11250, 11165, 11290, 9000, 19490, …

$ valueofgoods_omu <dbl> 141015, 141015, NA, NA, NA, NA, NA, NA, NA, NA, NA, N…

$ valueofgoodsusd <dbl> NA, NA, NA, NA, NA, NA, 87110, 188140, NA, 221110, 58…

$ volumeteu <dbl> 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,…